The CTO’s Guide to Shipping AI in 90 Days

Enterprises are under pressure to turn AI initiatives into measurable business outcomes. Many pilots stall due to fragmented execution, unclear ownership, and lack of governance. This guide provides a disciplined 90-day framework to deploy AI as a production-grade capability, fully integrated into workflows and accountable for tangible financial and operational results.

A Production-Grade Execution Framework for Enterprise AI

AI is no longer treated as an experimental innovation initiative inside large organizations. It is now a board-level mandate directly tied to revenue growth, cost optimization, competitive positioning, and operational resilience.

However, inside most enterprises, execution maturity does not match strategic ambition. Organizations frequently face fragmented pilots, undefined ownership structures, compliance uncertainty, and disconnected experimentation that never reaches production scale.

The issue is not a shortage of ideas or vendor proposals. The issue is the absence of a structured, accountable, production-grade execution model.

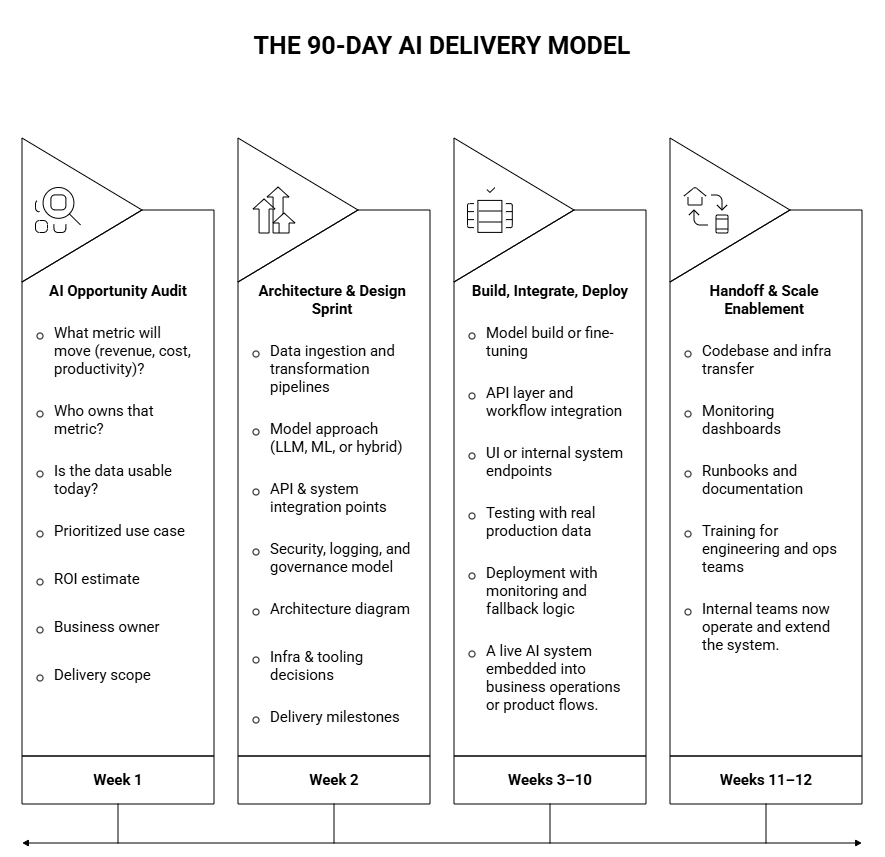

This guide outlines a disciplined 90-day AI delivery framework designed for enterprises that require measurable financial outcomes, operational control, governance alignment, and structured ownership transfer.

What “Production AI” Actually Means

Production AI is not a demo.

It is not a proof of concept.

It is not a chatbot on a landing page.

Production AI means:

- Deployed into live business workflows

- Integrated with CRM, ERP, internal systems, or product stack

- Backed by an SLA and observability tooling

- Monitored for drift and performance degradation

- Governed under security and compliance policies

- Financially accountable to a business owner

If these conditions are not met, the system is still a pilot.
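The six conditions above can be treated as a simple all-or-nothing gate. The following is a minimal sketch, not a prescribed tool: the class name `ProductionReadiness` and its field names are illustrative assumptions that mirror the list, and the example values are made up.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ProductionReadiness:
    """One flag per condition in the list above (names are illustrative)."""

    deployed_in_live_workflow: bool
    integrated_with_core_systems: bool
    backed_by_sla_and_observability: bool
    monitored_for_drift: bool
    governed_by_security_policy: bool
    has_accountable_business_owner: bool

    def unmet(self) -> list[str]:
        """Return the names of the conditions that are not yet satisfied."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def status(self) -> str:
        """A system counts as production only when every condition holds."""
        return "production" if not self.unmet() else "pilot"


# Hypothetical system: live and integrated, but with no drift monitoring
# and no named business owner.
candidate = ProductionReadiness(
    deployed_in_live_workflow=True,
    integrated_with_core_systems=True,
    backed_by_sla_and_observability=True,
    monitored_for_drift=False,
    governed_by_security_policy=True,
    has_accountable_business_owner=False,
)
print(candidate.status(), candidate.unmet())
```

Listing the unmet conditions, rather than returning only a yes or no, gives the review meeting a concrete gap list to assign.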

Why Enterprise AI Programs Stall

Across large organizations, AI programs consistently stall for structural and operational reasons rather than technical limitations.

Production Architecture Is Not Defined Early

Organizations often select use cases before understanding integration constraints, data limitations, or infrastructure dependencies. Without early architectural clarity, projects face rework, delays, and unexpected security or compliance objections.

Enterprise AI must begin with system-level thinking rather than model-level experimentation.

No End-to-End Delivery Capability Exists

Many teams possess data scientists but lack platform engineers, security architects, and integration specialists required for production deployment. Building a model is only a fraction of the work; integrating it reliably into enterprise workflows is significantly more complex.

Without cross-functional execution capability, initiatives stall between prototype and operational system.

Financial Ownership Is Undefined

AI projects often lack a clearly assigned executive accountable for financial impact and performance outcomes. When no single business leader owns the P&L implications, scaling and prioritization become politically fragmented.

Enterprise AI requires named ownership tied to measurable quarterly business targets.

Workflow Adoption Is Ignored

If AI systems require users to significantly alter behavior or switch tools, adoption resistance increases dramatically. Successful AI systems embed directly into existing workflows and reduce friction rather than create new operational burdens.

Technology alone does not guarantee usage; design alignment determines adoption.

Governance and Compliance Reviews Create Delays

Large enterprises must navigate data privacy reviews, legal approvals, vendor risk assessments, and security validation cycles. When governance considerations are introduced late in the process, projects experience significant delays and budget overruns.

Governance must be embedded into the execution model from the beginning.

How High-Performing Enterprise AI Teams Operate

Organizations that successfully deploy AI in production follow disciplined operating principles rather than experimental patterns.

One Use Case Tied to One Metric

High-performing teams focus on a single high-impact use case directly mapped to a measurable business KPI. This disciplined focus prevents fragmentation and ensures that all stakeholders align around a shared financial objective.

Architecture Defined Before Build

Before any model development begins, teams define data flows, system integration patterns, infrastructure constraints, and security boundaries. This architectural clarity prevents rework and accelerates reliable deployment into enterprise environments.

Financial Accountability Established Upfront

Baseline metrics and projected ROI are defined before sprint execution begins, ensuring transparency and executive confidence. Performance tracking mechanisms are built into the system from day one to validate financial assumptions.

Structured Sprint Cadence with Governance Gates

Weekly execution cycles include structured technical reviews, security validations, and stakeholder checkpoints. This governance discipline ensures that architectural, compliance, and business considerations remain aligned throughout development.

Clear Internal Ownership Transfer Model

AI systems are deployed with documentation, monitoring dashboards, retraining procedures, and operational runbooks. Ownership is gradually transitioned to internal teams, ensuring sustainability beyond external partner involvement.

AI is treated as a product capability embedded into enterprise architecture, not as a temporary consulting engagement.

Enterprise AI Governance and MLOps Framework

Enterprise-grade AI requires lifecycle management beyond initial deployment. Dataset versioning ensures reproducibility and traceability of training data over time. Prompt version control enables structured iteration and rollback in LLM-based systems.

Performance monitoring tracks accuracy, latency, and user behavior continuously in production environments. Drift detection mechanisms identify degradation caused by data shifts or operational changes.
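As one illustration of drift detection, the sketch below computes a Population Stability Index (PSI) between a baseline sample of a numeric feature and a recent production sample. PSI is one common choice among several and is not prescribed by this guide. The function name, the bucket count, and the 0.25 alert threshold (a widely used rule of thumb) are all assumptions.

```python
import math
from typing import Sequence

# Assumed alert threshold; tune it per feature and per use case.
DRIFT_THRESHOLD = 0.25


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples using equal-width buckets over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bucket.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Synthetic data for illustration only.
baseline_scores = [float(v) for v in range(100)]
shifted_scores = [v + 50.0 for v in baseline_scores]

psi = population_stability_index(baseline_scores, shifted_scores)
print("drift detected" if psi > DRIFT_THRESHOLD else "stable")
```

In practice a check like this would run on a schedule for each monitored feature or model output, with alerts routed to whoever owns the system.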

Audit logs and role-based access controls enforce compliance with security and regulatory requirements. Incident response playbooks define escalation procedures for model failures or unexpected outputs.

Production AI without governance and monitoring introduces unacceptable enterprise risk.

How CTOs Should Evaluate ROI

Every AI initiative must map directly to a measurable financial or operational KPI.

Revenue-focused initiatives may include AI-powered product features, sales copilots improving conversion rates, or customer intelligence systems enhancing upsell effectiveness. Cost reduction initiatives may involve support automation, document processing, or workflow streamlining in operational departments.

Productivity-focused initiatives can include engineering copilots, internal knowledge assistants, or decision-support systems reducing manual analysis time.

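For example, support automation that handles 60 percent of 50,000 monthly tickets removes 30,000 tickets a month from the human queue. Assuming each automated ticket avoids four dollars of handling cost, that is 120,000 dollars a month, or about 1.44 million dollars in gross annual savings. However, enterprise ROI modeling must also account for infrastructure costs, monitoring systems, human oversight, and retraining cycles.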

Gross savings are attractive; net operational impact determines sustainability.
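The gap between gross and net can be made explicit with a few lines of arithmetic. In the sketch below, the ticket volume, automation rate, and per-ticket figure come from the support automation example. The four annual cost figures are hypothetical placeholders, not benchmarks, and should be replaced with real estimates.

```python
# Figures from the support automation example.
MONTHLY_TICKETS = 50_000
AUTOMATION_PERCENT = 60
COST_PER_TICKET_USD = 4  # assumed to be the handling cost avoided per ticket

automated_per_month = MONTHLY_TICKETS * AUTOMATION_PERCENT // 100
gross_annual_savings = automated_per_month * COST_PER_TICKET_USD * 12

# HYPOTHETICAL annual operating costs, for illustration only.
annual_costs_usd = {
    "infrastructure": 180_000,
    "monitoring": 60_000,
    "human_oversight": 240_000,
    "retraining": 120_000,
}

net_annual_impact = gross_annual_savings - sum(annual_costs_usd.values())

print(f"Automated tickets per month: {automated_per_month:,}")
print(f"Gross annual savings: ${gross_annual_savings:,}")
print(f"Net annual impact: ${net_annual_impact:,}")
```

Keeping each cost as a named line item lets the accountable business owner challenge each assumption individually.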

Selecting the Right AI Use Case

Enterprises should evaluate initiatives across business impact, data readiness, implementation complexity, regulatory exposure, and organizational change load. High-impact, low-complexity initiatives should be prioritized for immediate execution to generate early momentum.

High-impact but high-complexity initiatives may require phased strategic investment with extended governance planning. Low-impact initiatives should be deprioritized to prevent resource dilution.

Disciplined prioritization prevents pilot overload and ensures measurable enterprise value.
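The prioritization rules above can be sketched as a small triage function. The 1-to-5 scales, the way the four effort-side dimensions are averaged, and both thresholds are assumptions chosen for illustration. The example use cases and their scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """Scores on an assumed 1 (low) to 5 (high) scale."""

    name: str
    business_impact: int
    data_readiness: int
    implementation_complexity: int
    regulatory_exposure: int
    change_load: int


def effort(uc: UseCase) -> float:
    """Average the effort-side dimensions; low data readiness adds effort."""
    data_gap = 6 - uc.data_readiness  # invert so that 5 (ready) becomes 1
    return (
        uc.implementation_complexity
        + uc.regulatory_exposure
        + uc.change_load
        + data_gap
    ) / 4


def triage(uc: UseCase, min_impact: int = 4, max_effort: float = 3.0) -> str:
    """Map a use case to one of the three outcomes described above."""
    if uc.business_impact < min_impact:
        return "deprioritize"
    if effort(uc) <= max_effort:
        return "execute now"
    return "phased investment"


# Hypothetical portfolio.
portfolio = [
    UseCase("support automation", 5, 4, 2, 2, 2),
    UseCase("automated underwriting", 5, 2, 5, 5, 4),
    UseCase("meeting summaries", 2, 4, 1, 1, 1),
]
decisions = {uc.name: triage(uc) for uc in portfolio}
print(decisions)
```

The value of a scoring pass like this is less the exact numbers than forcing every candidate through the same five questions before it consumes engineering time.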

Build vs Buy vs Partner

Build When:

- AI is core intellectual property

- You have mature AI and platform teams

- Long-term strategic differentiation is required

Buy When:

- The use case is standardized

- Vendor solutions meet security requirements

- Integration complexity is minimal

Partner When:

- You require production deployment in 90 days

- Internal AI capability is emerging

- You need architecture design and delivery rigor

- You want structured internal ownership transfer

Most enterprises succeed with partner-led execution followed by controlled internal handover.

Organizational Change Management

Technology implementation alone does not guarantee business impact; incentive alignment drives adoption. Executive sponsorship must reinforce AI usage through performance metrics and accountability structures.

Training programs should ensure users understand system capabilities, limitations, and expected workflows. Performance management systems may require adjustment to reflect AI-assisted processes.

AI initiatives fail more frequently due to behavioral resistance than technical shortcomings.

What Enterprise Leaders Should Demand

Before approving any AI investment, leaders should demand clarity on financial metrics, ownership accountability, production architecture, governance frameworks, monitoring strategies, and post-launch operational models.

If these elements are undefined, the initiative lacks production readiness.

Request a Tailored AI Execution Roadmap

Bluetick Consultants works with enterprise product and operations leaders to identify high-impact AI opportunities grounded in measurable business value. The engagement includes production-grade architecture design, governance alignment, and realistic ROI modeling.

Within 90 days, organizations receive a deployed AI system embedded into workflows, supported by monitoring frameworks and structured internal ownership transfer.

AI is not a strategy presentation. It is a deployed capability that produces measurable, governed, and sustainable business impact.